gptdevelopers.io

Enterprise Code Audit Framework for Performance, Security, and Scale

Your stack never drifts in just one dimension; performance, security, and scalability erode together. A rigorous code audit framework surfaces where complexity, latency, and risk accumulate, then turns those findings into automation. Done well, the audit becomes a product: observable, repeatable, enforceable across teams, time zones, and a global talent network. Whether you rely on in-house engineers, X-Team developers, or partners like slashdev.io, the goal is the same: codify high standards and make them the default.

Phase 1: Baseline and Inventory

Start with a verifiable map, not guesses. Your first deliverable is a machine-readable inventory paired with operational baselines.

- Generate an SBOM (e.g., Syft) and dependency graph; note licenses, versions, and transitive risks.

- Export infrastructure-as-code plans and cloud runtime topology; mark public entry points and data flows.

- Collect SLOs, P95/P99 latencies, error budgets, and cost per request by service.

- Identify test coverage at critical paths; highlight untested auth, billing, and migration code.
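One way to make the latency baseline reproducible is to compute the percentiles from raw per-request samples with a fixed, documented method. The sketch below uses the nearest-rank definition; the function names and the sample data are illustrative assumptions, and many teams would derive these figures from histogram metrics in their monitoring system instead.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_baseline(samples_ms):
    """Summarize one service's request latencies (milliseconds) for the inventory."""
    return {
        "count": len(samples_ms),
        "p50_ms": percentile(samples_ms, 50),
        "p95_ms": percentile(samples_ms, 95),
        "p99_ms": percentile(samples_ms, 99),
    }


if __name__ == "__main__":
    # Hypothetical samples: 1 ms through 100 ms, one request each.
    print(latency_baseline(list(range(1, 101))))
```

Storing the output alongside the SBOM gives later phases a fixed reference point to compare against.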

Performance Deep-Dive

Focus on evidence, not intuition. Attach numbers to hotspots and add guardrails that prevent regression.

- Instrument end-to-end tracing (W3C Trace Context). Require spans for DB, cache, queue, and external calls.

- Capture flamegraphs at peak load; flag functions with >20% CPU or >10% wall time.

- Audit query plans; add composite indexes for top 10 slow queries; enforce query timeouts.

- Establish performance budgets per endpoint: P95 latency targets, memory ceilings, and payload size caps.

- Introduce a two-tier cache policy: request-level memoization plus distributed cache with TTL aligned to data staleness tolerance.
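The two-tier cache policy in the last item can be sketched as follows. This is a minimal illustration, not a production design: `TTLCache` is an in-process stand-in for a distributed cache such as Redis, and the class names, the `loader` callback, and the injectable clock are all assumptions made for the example.

```python
import time


class TTLCache:
    """In-process stand-in for a distributed cache; entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self._clock() + self._ttl)


class RequestScope:
    """Tier 1: per-request memo. Tier 2: shared TTL cache. Last resort: the loader."""

    def __init__(self, shared_cache, loader):
        self._memo = {}
        self._shared = shared_cache
        self._loader = loader

    def get(self, key):
        if key in self._memo:
            return self._memo[key]
        value = self._shared.get(key)
        if value is None:  # note: None results are never cached in this sketch
            value = self._loader(key)
            self._shared.set(key, value)
        self._memo[key] = value
        return value
```

Create one `RequestScope` per incoming request and share a single `TTLCache`, with the TTL chosen from how stale the data is allowed to be.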

Case example: A fintech service reduced P95 latency by 41% by eliminating an ORM N+1 on account summaries, moving a JSON aggregation to SQL with a covering index, and streaming responses to cut TTFB.

Security Surface Mapping

Threats hide in default configurations and forgotten edges. Treat security as code with auditable controls.

- Run dependency scanning with CVE gating; block builds on high-severity issues lacking compensating controls.

- Apply secrets scanning on commits and images; rotate keys automatically on detection.

- Codify baseline policies: default-deny egress, IMDSv2 only, and mutual TLS for east-west traffic.

- Combine SAST, DAST, and IAST; require fix verification via proof-of-exploit tests where feasible.

- Enforce least privilege via IaC policy-as-code; diff drift nightly and alert on new public resources.
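The CVE gating rule in the first item can be expressed as a small, testable function. The sketch below is an assumption about how such a gate might be modeled: the `Finding` and `Waiver` shapes are hypothetical and do not correspond to any particular scanner's output format.

```python
from dataclasses import dataclass
from datetime import date

BLOCKING_SEVERITIES = {"HIGH", "CRITICAL"}


@dataclass(frozen=True)
class Finding:
    cve_id: str
    package: str
    severity: str


@dataclass(frozen=True)
class Waiver:
    cve_id: str
    compensating_control: str  # must be non-empty for the waiver to count
    expires: date


def blocking_findings(findings, waivers, today):
    """Return the high-severity findings with no active, justified waiver."""
    active = {
        w.cve_id
        for w in waivers
        if w.expires >= today and w.compensating_control.strip()
    }
    return [
        f for f in findings
        if f.severity.upper() in BLOCKING_SEVERITIES and f.cve_id not in active
    ]


if __name__ == "__main__":
    # Placeholder identifiers, not real CVEs.
    findings = [
        Finding("CVE-0000-0001", "libfoo", "high"),
        Finding("CVE-0000-0002", "libbar", "critical"),
        Finding("CVE-0000-0003", "libbaz", "low"),
    ]
    waivers = [Waiver("CVE-0000-0001", "WAF rule blocks the vulnerable path", date(2030, 1, 1))]
    blockers = blocking_findings(findings, waivers, today=date(2025, 1, 1))
    raise SystemExit(1 if blockers else 0)  # non-zero exit fails the build
```

Giving waivers an expiry date keeps a compensating control from quietly becoming a permanent exception.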

Example: An e-commerce platform prevented SSRF by restricting metadata IP ranges, enforcing egress via a proxy with DNS pinning, and sanitizing URL fetchers to explicit allowlists.
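A URL fetcher guarded along these lines might look like the sketch below. It is an illustration under stated assumptions rather than the platform's actual code: the allowlisted hostnames are hypothetical, and returning the validated address so the caller connects to exactly that IP is one simple way to approximate DNS pinning.

```python
import ipaddress
import socket
from typing import Callable, List, Optional
from urllib.parse import urlsplit

# Hypothetical allowlist; a real one would come from reviewed configuration.
ALLOWED_HOSTS = frozenset({"images.example.com", "api.example.com"})


def system_resolve(host: str) -> List[str]:
    """Resolve a hostname to its IP addresses using the system resolver."""
    infos = socket.getaddrinfo(host, 443, proto=socket.IPPROTO_TCP)
    return [info[4][0] for info in infos]


def is_public(addr: str) -> bool:
    """Reject private, loopback, link-local (incl. 169.254.169.254), and similar ranges."""
    ip = ipaddress.ip_address(addr)
    return not (ip.is_private or ip.is_loopback or ip.is_link_local
                or ip.is_reserved or ip.is_multicast or ip.is_unspecified)


def pinned_target(url: str,
                  resolve: Callable[[str], List[str]] = system_resolve) -> Optional[str]:
    """Return the IP to connect to if the URL is allowed, otherwise None.

    The caller should connect to the returned address instead of resolving
    the hostname again, so a second DNS answer cannot redirect the request.
    """
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https" or host not in ALLOWED_HOSTS:
        return None
    addrs = resolve(host)
    if not addrs or not all(is_public(a) for a in addrs):
        return None
    return addrs[0]
```

Application-level checks like this complement, rather than replace, the network controls named above (egress proxy, metadata endpoint restrictions).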

Scalability and Reliability

Scale is not just capacity; it is predictability under stress. Model backpressure and failure domains first.

- Load-profile by traffic mode (steady, spiky, burst). Validate autoscaling signals on queue depth and utilization, not CPU alone.

- Introduce circuit breakers, retries with jitter, and idempotency keys across external integrations.

- Partition stateful services by tenant or region; verify shard rebalancing is deterministic.

- Run chaos experiments during off-peak windows; assert time-to-recovery SLOs are met.
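Retries with jitter and idempotency keys, from the list above, fit together as in this sketch. It assumes the "full jitter" variant of exponential backoff and a hypothetical `operation` callback that forwards the key to the remote service; the circuit breaker is left out to keep the example short.

```python
import random
import time
import uuid


class TransientError(Exception):
    """Raised by the operation for failures that are safe to retry."""


def backoff_delays(attempts, base=0.1, cap=5.0, rng=random):
    """Full jitter: delay n is uniform in [0, min(cap, base * 2**n)] seconds."""
    return [rng.uniform(0, min(cap, base * 2 ** n)) for n in range(attempts)]


def call_with_retries(operation, max_attempts=4, sleep=time.sleep, rng=random):
    """Call operation(idempotency_key), retrying transient failures with jitter.

    The same key is sent on every attempt so the receiving service can
    deduplicate a request that succeeded but whose response was lost.
    """
    idempotency_key = str(uuid.uuid4())
    delays = backoff_delays(max_attempts - 1, rng=rng)
    for attempt in range(max_attempts):
        try:
            return operation(idempotency_key)
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # retry budget exhausted; surface the failure
            sleep(delays[attempt])


if __name__ == "__main__":
    seen = []

    def flaky_charge(key):
        # Hypothetical external call that fails twice, then succeeds.
        seen.append(key)
        if len(seen) < 3:
            raise TransientError("upstream timeout")
        return "charged"

    print(call_with_retries(flaky_charge), len(set(seen)))
```

Randomizing each delay spreads retries from many clients over time, which avoids synchronized retry waves against a recovering dependency.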

Result: A video platform cut infra spend by 30% by modeling queue throughput, replacing fan-out websockets with topic-based multiplexing, and shifting cold transcoding to spot fleets with graceful fallback.

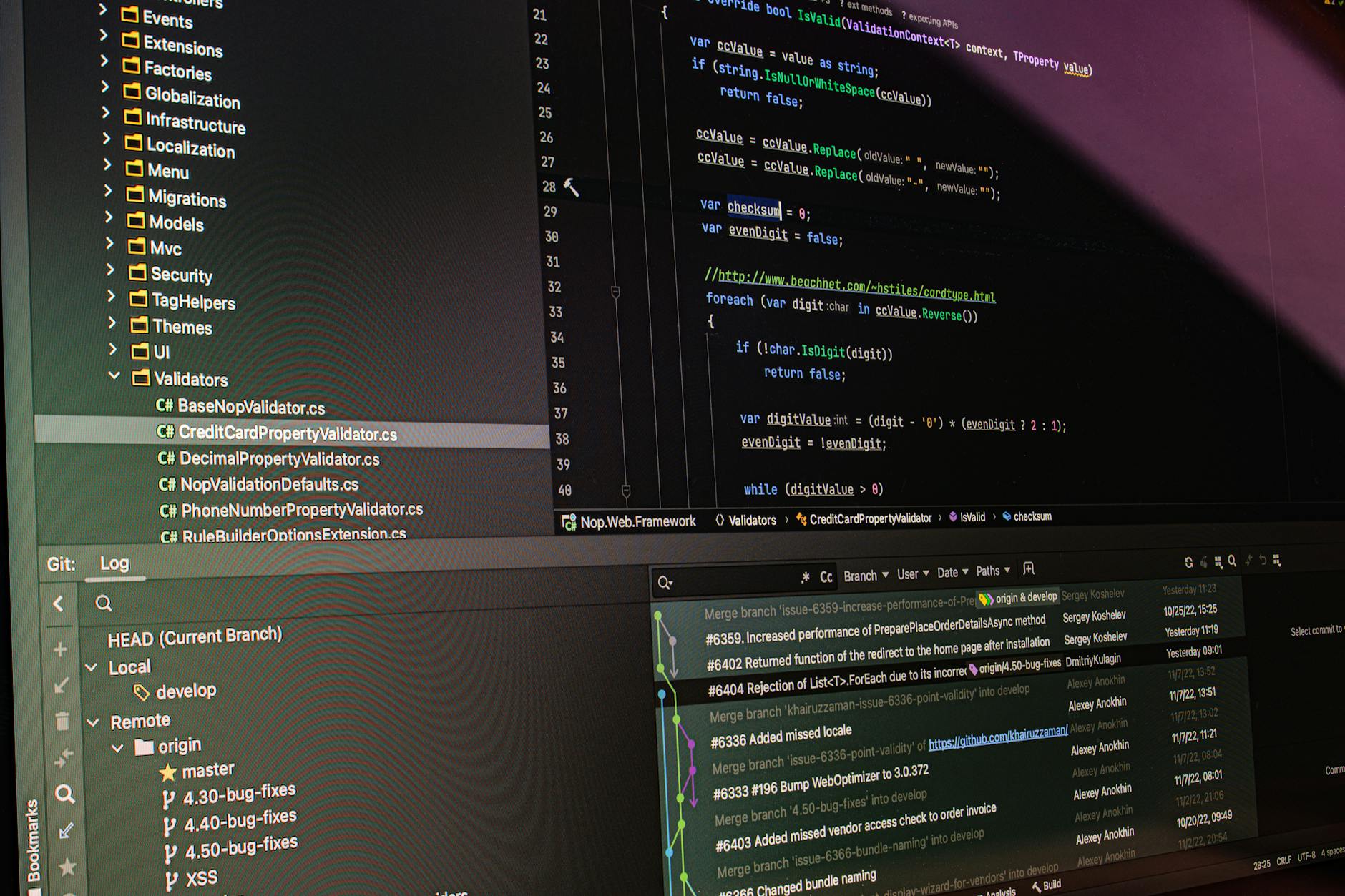

CI/CD Pipeline Setup and Automation as Audit Enforcer

Codify findings so they persist beyond slide decks. CI/CD pipeline setup and automation must convert audit rules into merge-blocking checks and progressive delivery.

- Pre-commit: run linters, secrets scan, and dependency diffs; stop issues before PRs exist.

- PR stage: spin ephemeral environments; execute performance smoke (50 rps), SAST/DAST, and policy checks.

- Gates: fail if P95 regresses >5%, coverage drops below thresholds on critical paths, or high CVEs appear without waivers.

- Release: canary in stages (5%, 20%, 50%, 100%) with automated rollback on error-budget burn or anomaly detection.

- Continuous verification: compare live SLOs to PR baselines; create tickets automatically for drift.
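The gate and release rules above can be captured as plain functions that a pipeline step calls. In this sketch the 5% regression limit and the canary stages come from the list; the 80% coverage floor, the field names, and the "0 means roll back" convention are assumptions chosen for illustration.

```python
from dataclasses import dataclass

CANARY_STAGES = (5, 20, 50, 100)  # percent of traffic at each stage


@dataclass(frozen=True)
class GateInput:
    baseline_p95_ms: float
    candidate_p95_ms: float
    critical_path_coverage: float  # fraction between 0.0 and 1.0
    unwaived_high_cves: int


def gate_failures(g, max_regression=0.05, min_coverage=0.80):
    """Return human-readable reasons to block the merge; empty means pass."""
    failures = []
    regression = (g.candidate_p95_ms - g.baseline_p95_ms) / g.baseline_p95_ms
    if regression > max_regression:
        failures.append(f"P95 regressed by {regression:.1%}")
    if g.critical_path_coverage < min_coverage:
        failures.append(f"critical-path coverage {g.critical_path_coverage:.0%} below floor")
    if g.unwaived_high_cves > 0:
        failures.append(f"{g.unwaived_high_cves} high CVE(s) without waivers")
    return failures


def next_canary_percent(current_percent, healthy):
    """Advance to the next stage when healthy; return 0 to signal rollback."""
    if not healthy:
        return 0
    for stage in CANARY_STAGES:
        if stage > current_percent:
            return stage
    return 100  # already fully rolled out


if __name__ == "__main__":
    result = gate_failures(GateInput(200.0, 215.0, 0.91, 0))
    print(result or "all gates passed")
    print(next_canary_percent(20, healthy=True))
```

Keeping the thresholds as function parameters lets each service tighten or loosen a gate through reviewed configuration rather than by editing pipeline scripts.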

Teaming Model: Global Talent Network

Audits accelerate when specialists pair with owners. Tap a global talent network to fill targeted gaps: database tuning one week, IaC policy hardening the next. X-Team developers are effective when embedded with clear audit artifacts, while partners like slashdev.io bring seasoned remote engineers and agency discipline to convert findings into scalable patterns.

Artifacts, Scoring, and Roadmap

End with assets that drive change: a scored heatmap per service (performance, security, scalability), a risk-adjusted ROI model, and a 90-day roadmap. Score on measurable deltas (latency, exploitability, blast radius, cost per request) and tie each action to CI gates so wins are preserved.

Quick Wins Checklist

- Add request IDs and trace context to every log line.

- Block public S3 buckets and require TLS 1.2+ everywhere.

- Set timeouts and retries on all outbound calls; no infinite waits.

- Introduce read replicas and connection pooling; cap pool size per pod.

- Compress JSON responses; paginate and stream large payloads.

- Implement dependency update bots with auto-merge for safe patches.

- Enforce JWT audience/issuer validation; rotate signing keys.

- Adopt resource quotas and HPA based on custom metrics.

- Run weekly flamegraphs on production-like load; track diffs.

- Automate rollback with canary health checks tied to SLOs.
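As an example of the first quick win, the sketch below attaches a request ID to every log line using only the standard library. The logger name, log format, and `handle_request` function are illustrative assumptions; a W3C `traceparent` value could be carried in a second context variable in the same way.

```python
import contextvars
import io
import logging
import uuid

# Holds the current request's ID; "-" marks log lines emitted outside a request.
request_id_var = contextvars.ContextVar("request_id", default="-")


class RequestIdFilter(logging.Filter):
    """Copy the current request ID onto each record so the formatter can use it."""

    def filter(self, record):
        record.request_id = request_id_var.get()
        return True


def build_logger(stream, name="audit-demo"):
    """Return a logger whose lines always include request_id=<value>."""
    handler = logging.StreamHandler(stream)
    handler.addFilter(RequestIdFilter())
    handler.setFormatter(
        logging.Formatter("%(levelname)s request_id=%(request_id)s %(message)s"))
    logger = logging.getLogger(name)
    logger.handlers.clear()
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def handle_request(logger, incoming_id=None):
    """Hypothetical handler: reuse the caller's ID if present, else mint one."""
    token = request_id_var.set(incoming_id or uuid.uuid4().hex)
    try:
        logger.info("charge created")
    finally:
        request_id_var.reset(token)  # do not leak the ID into the next request


if __name__ == "__main__":
    buffer = io.StringIO()
    log = build_logger(buffer)
    handle_request(log, incoming_id="req-123")
    print(buffer.getvalue(), end="")
```

Because the ID lives in a context variable, code deep in the call stack logs it without the value being passed through every function signature.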

A disciplined audit is less about commentary and more about codified guarantees. When every insight becomes a test, a policy, or a metric gate, your stack stops drifting and starts compounding reliability, speed, and trust.